The role of bias in Neural Networks

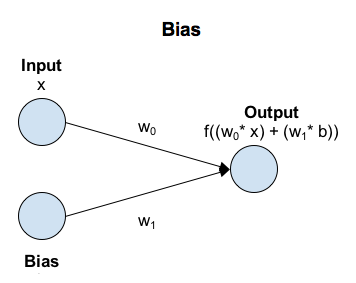

The activation function in Neural Networks takes an input 'x' multiplied by a weight 'w'. Bias allows you to shift the activation function by adding a constant (i.e. the given bias) to that input. Bias in Neural Networks plays the same role as the constant term in a linear function, where the constant shifts (translates) the line without changing its slope.
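The linear-function analogy can be made concrete with a small sketch. The function name `line` and the values `w=2.0` and `b=3.0` are illustrative assumptions, not taken from the article.

```python
def line(x, w, b=0.0):
    """Linear function y = w*x + b, where b is the constant term."""
    return w * x + b

xs = [-1.0, 0.0, 1.0]

# Without a constant, the line is forced through the origin (y = 0 at x = 0).
no_offset = [line(x, w=2.0) for x in xs]

# With a constant, every point on the line moves by the same amount.
with_offset = [line(x, w=2.0, b=3.0) for x in xs]

# The difference between the two lines is the constant at every x.
shifts = [shifted - base for base, shifted in zip(no_offset, with_offset)]
```

The slope is the same in both cases; only the position of the line changes, which is the behaviour bias adds to a neuron.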

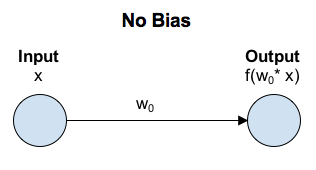

In a scenario with no bias, the input to the activation function is 'x' multiplied by the connection weight 'w0'.

In a scenario with bias, the input to the activation function is 'x' times the connection weight 'w0', plus the bias 'b' times its own connection weight 'w1'. This shifts the input to the activation function by a constant amount (b * w1), which moves the activation curve sideways along the x-axis without changing its shape.
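The two scenarios can be compared with a minimal sketch. The article does not name a specific activation function, so the sigmoid used here is an assumption, as are the helper name `neuron` and the example values for `w0`, `b` and `w1`.

```python
import math

def sigmoid(z):
    """Sigmoid activation (assumed here; the article names no specific function)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(x, w0, b=0.0, w1=0.0):
    """Activation of x*w0 + b*w1; with the defaults there is no bias term."""
    return sigmoid(x * w0 + b * w1)

# Illustrative values only.
w0, b, w1 = 2.0, 1.0, -4.0

# No bias: the sigmoid's midpoint (output 0.5) is pinned at x = 0.
no_bias_at_zero = neuron(0.0, w0)

# With bias: the midpoint moves to the x where x*w0 + b*w1 = 0.
midpoint = -(b * w1) / w0
with_bias_at_midpoint = neuron(midpoint, w0, b, w1)

# At x = 0 the biased neuron is now far from its midpoint.
with_bias_at_zero = neuron(0.0, w0, b, w1)
```

With these values the constant b * w1 is -4, so the curve keeps its shape but its midpoint moves from x = 0 to x = 2. Changing 'w0' alone could only make the curve steeper or flatter around x = 0.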