Difference between Linear Regression and Logistic Regression

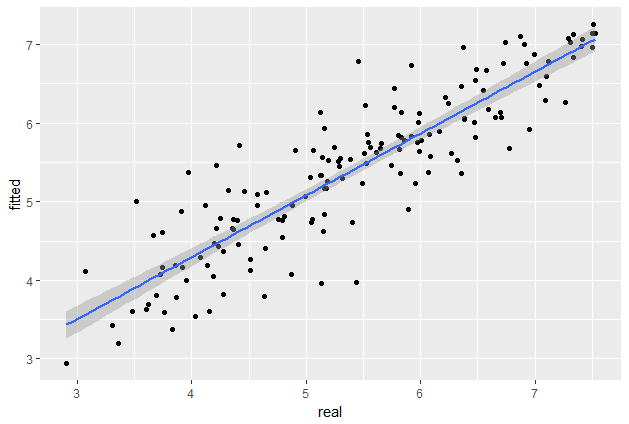

Linear Regression uses a linear function to map input variables to a continuous response (dependent) variable. Once fitted, a Linear Regression model can be used to predict the value of the response variable for new values of the input variables. An example application, in a financial trading analytics context, might be to predict the total value of trades that will occur by end of day based on the number and size of orders submitted so far. The output of Linear Regression is a continuous value. Because the prediction is a weighted sum of the inputs plus an intercept (a straight line for a single input, a plane or hyperplane for several), it can take any real value. This means that outputs can be positive or negative, with no upper or lower bound.
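To make the "any real value" point concrete, here is a minimal sketch in plain Python (no third-party libraries assumed) of single-input ordinary least squares. The order counts and trade values are made-up illustrative numbers, and `fit_simple_linear_regression` and `predict` are hypothetical helper names, not part of any library. A single input keeps the closed-form fit short: the slope is the covariance of x and y divided by the variance of x, and the intercept makes the line pass through the two means.

```python
from statistics import fmean


def fit_simple_linear_regression(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit of y = intercept + slope * x for a single input."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    mean_x, mean_y = fmean(xs), fmean(ys)
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel out.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("inputs must not all be identical")
    slope = sxy / sxx
    # The fitted line always passes through (mean_x, mean_y).
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(intercept: float, slope: float, x: float) -> float:
    # A plain linear function of x: nothing clamps the result to a range.
    return intercept + slope * x


# Hypothetical, made-up data: orders submitted so far (thousands)
# versus end-of-day trade value (millions).
orders_so_far = [1, 2, 3, 4, 5]
trade_value = [12, 14, 16, 18, 20]

intercept, slope = fit_simple_linear_regression(orders_so_far, trade_value)
print(intercept, slope)                 # 10.0 2.0
print(predict(intercept, slope, 10))    # 30.0
print(predict(intercept, slope, -20))   # -30.0
```

Nothing in `predict` bounds the result, so an input outside the range seen during fitting can produce a value far larger or smaller than any training target, including a negative one.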

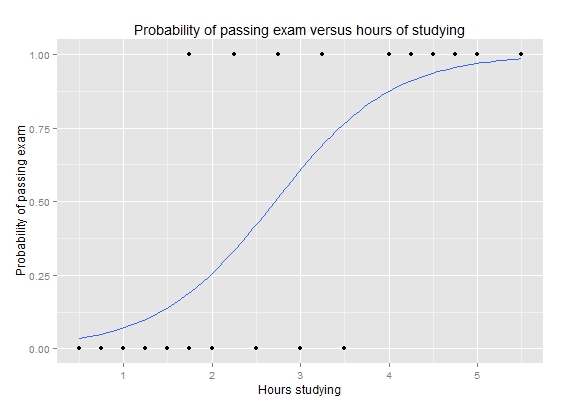

Logistic Regression uses the logistic (sigmoid) function to map a linear combination of the input variables to a categorical response (dependent) variable. In contrast to Linear Regression, Logistic Regression outputs a probability between 0 and 1. In essence, Logistic Regression estimates the probability of a binary outcome rather than predicting the outcome itself; a class label is obtained by applying a threshold (commonly 0.5) to that probability. Logistic Regression is typically used for binary classification problems, where the output is the probability that a given input belongs to the target class. For example, threat detection for cybersecurity aims to distinguish between benign and suspicious patterns of host and network activity; this is a binary classification problem that can potentially be tackled using Logistic Regression (among other techniques).
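The sketch below, again plain Python and offered only as an illustration, fits a one-feature logistic regression by batch gradient descent on the mean log-loss, then thresholds the resulting probability to get a class label. The feature (suspicious events per hour on a host), the labels, and helper names such as `fit_logistic_regression` are hypothetical; the learning rate and epoch count are arbitrary choices for this toy data rather than recommended settings, and real projects would more likely use a library solver.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function 1 / (1 + e^-z), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic_regression(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit p(y=1 | x) = sigmoid(w * x + b) by batch gradient descent on mean log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Log-loss gradient: mean((p - y) * x) for w and mean(p - y) for b.
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y
            grad_w += error * x
            grad_b += error
        w -= learning_rate * grad_w / n
        b -= learning_rate * grad_b / n
    return w, b


def predict_proba(w: float, b: float, x: float) -> float:
    # Estimated probability that x belongs to the positive ("suspicious") class.
    return sigmoid(w * x + b)


def predict_class(w: float, b: float, x: float, threshold: float = 0.5) -> int:
    # Turn the probability into a binary label by thresholding.
    return int(predict_proba(w, b, x) >= threshold)


# Hypothetical, made-up data: suspicious events per hour, and 1 if the host was a threat.
events = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
labels = [0, 0, 0, 1, 0, 1, 0, 1, 1, 1]

w, b = fit_logistic_regression(events, labels)
for x in (0, 4.5, 9, 100):
    print(x, round(predict_proba(w, b, x), 3), predict_class(w, b, x))
```

However extreme the input, `predict_proba` stays within 0 to 1 (very large inputs round to exactly 1.0 in floating point), which is the key contrast with the unbounded output of Linear Regression.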